Hey everyone. As you may already know, Kubernetes deprecated Docker as a container runtime starting with v1.20: the built-in Docker support (dockershim) still works in this version but is scheduled for removal in a later release, because Docker itself is not a CRI-compliant (Container Runtime Interface) engine. In this tutorial, I will demonstrate how to use an alternative, the CRI-O runtime engine. I assume that you are already familiar with Kubernetes deployments, so I will head directly to the points of focus.

CRI-O must run the same version as the deployed Kubernetes: if you intend to deploy Kubernetes 1.20, CRI-O must also be at release 1.20.

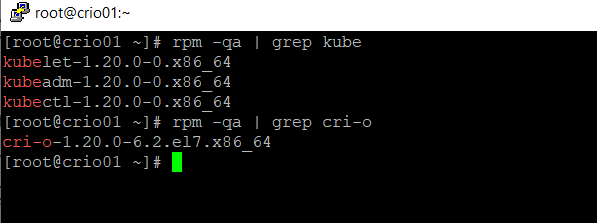

Versions used:

- OS: CentOS 7 (7.9)

- Kubernetes: v1.20

- CRI: CRI-O v1.20

- CNI plugin: Calico

All of the steps below are also covered in the accompanying video tutorial.

The operating system I’m using is a fresh installation. If you have other container runtime engines installed, please remove them first. I will be using a few virtual machines hosted on VMware ESXi 6.7 for this demo; it is a good idea to take a snapshot before performing any of the actions in this article.

The steps and scripts in this guide were prepared from the product documentation and tested on CentOS 7, so they should make your life much easier. For other OS versions, please refer to the relevant documentation and make the required changes.

Now, let’s begin the preparation and deployment.

1- Use the following script to update the OS, prepare it, and install all of the required binaries for our setup. I run this script on every node that will participate in the K8s cluster (including the master nodes).

Again, make sure you install Kubernetes and CRI-O at the same version; I’m using v1.20 in this demo. Set the OS version and the component versions in the script. As written, it is pre-configured to install Kubernetes and CRI-O v1.20 on CentOS 7.

##Update the OS

yum update -y

## Install yum-utils, bash completion, git, and more

yum install yum-utils nfs-utils bash-completion git -y

##Disable firewall starting from Kubernetes v1.19 onwards

systemctl disable firewalld --now

## Let iptables see bridged traffic

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf

br_netfilter

EOF

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sudo sysctl --system

##

## iptables config as specified by CRI-O documentation

# Create the .conf file to load the modules at bootup

cat <<EOF | sudo tee /etc/modules-load.d/crio.conf

overlay

br_netfilter

EOF

sudo modprobe overlay

sudo modprobe br_netfilter

# Set up required sysctl params, these persist across reboots.

cat <<EOF | sudo tee /etc/sysctl.d/99-kubernetes-cri.conf

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF

sudo sysctl --system

###

## configuring Kubernetes repositories

cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://packages.cloud.google.com/yum/repos/kubernetes-el7-\$basearch

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://packages.cloud.google.com/yum/doc/yum-key.gpg https://packages.cloud.google.com/yum/doc/rpm-package-key.gpg

exclude=kubelet kubeadm kubectl

EOF

## Set SELinux in permissive mode (effectively disabling it)

sudo setenforce 0

sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

### Disable swap

swapoff -a

##make a backup of fstab

cp -f /etc/fstab /etc/fstab.bak

##Remove swap from fstab

sed -i '/swap/d' /etc/fstab

##Refresh repo list

yum repolist -y

## Install CRI-O binaries

##########################

# Operating system -> value to use for $OS

# CentOS 8 -> CentOS_8

# CentOS 8 Stream -> CentOS_8_Stream

# CentOS 7 -> CentOS_7

# Set the OS version

OS=CentOS_7

#set CRI-O

VERSION=1.20

# Install CRI-O

sudo curl -L -o /etc/yum.repos.d/devel:kubic:libcontainers:stable.repo https://download.opensuse.org/repositories/devel:/kubic:/libcontainers:/stable/$OS/devel:kubic:libcontainers:stable.repo

sudo curl -L -o /etc/yum.repos.d/devel:kubic:libcontainers:stable:cri-o:$VERSION.repo https://download.opensuse.org/repositories/devel:kubic:libcontainers:stable:cri-o:$VERSION/$OS/devel:kubic:libcontainers:stable:cri-o:$VERSION.repo

sudo yum install cri-o -y

## Install Kubernetes; use the same version as CRI-O

yum install -y kubelet-1.20.0-0 kubeadm-1.20.0-0 kubectl-1.20.0-0 --disableexcludes=kubernetes
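
The kubelet refuses to start by default while swap is enabled, so it can be worth confirming that the swap step in the script above really took effect before moving on. The following is a minimal sketch of such a check; the function name `swap_is_active` is my own, and the optional file argument exists only so the function can be tried against a sample file.

```shell
# Succeed (exit 0) if the given swaps table lists at least one active swap area.
# The file defaults to /proc/swaps; the parameter is an illustrative addition.
swap_is_active() {
  swaps_file=${1:-/proc/swaps}
  # /proc/swaps starts with a header line; any further non-empty line is an active swap area.
  awk 'NR > 1 && NF > 0 { found = 1 } END { exit !found }' "$swaps_file"
}

# Usage on a node (reads the real /proc/swaps):
#   if swap_is_active; then echo "swap is still on"; else echo "swap is off"; fi
```

If the check reports that swap is still on, rerun swapoff -a and review /etc/fstab (the script keeps a backup at /etc/fstab.bak).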

2- After running the script, make sure that the installed versions of Kubernetes and CRI-O match.

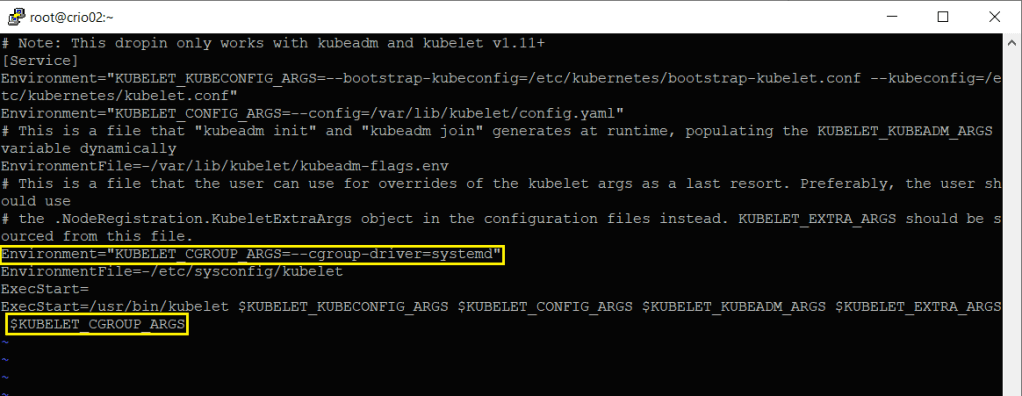
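
If you prefer to script the version comparison, here is a small sketch. The helper names `minor_of` and `same_minor` are hypothetical; they only compare the MAJOR.MINOR part of two version strings, which is the part that has to agree between Kubernetes and CRI-O.

```shell
# Print the MAJOR.MINOR part of a version string such as "v1.20.0" or "1.20.2".
minor_of() {
  printf '%s\n' "$1" | sed -E 's/^v?([0-9]+)\.([0-9]+).*$/\1.\2/'
}

# Succeed only when both version strings share the same MAJOR.MINOR.
same_minor() {
  [ "$(minor_of "$1")" = "$(minor_of "$2")" ]
}

# Usage on a CentOS node (package names as installed by the script above):
#   same_minor "$(rpm -q --queryformat '%{VERSION}' kubelet)" \
#              "$(rpm -q --queryformat '%{VERSION}' cri-o)" \
#     && echo "versions match" || echo "version mismatch"
```

Querying the RPM database is only one option; the binaries' own version output works as well if you would rather read it by eye.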

3- A couple of modifications must be made to the kubelet service configuration to make it work with CRI-O; hopefully this will be handled automatically in future releases of Kubernetes. CRI-O uses “systemd” as its cgroup driver, so the kubelet has to use the same driver. Follow these instructions carefully. Edit the file “/usr/lib/systemd/system/kubelet.service.d/10-kubeadm.conf” and add the CRI-O lines as indicated below: the KUBELET_CGROUP_ARGS environment line, and $KUBELET_CGROUP_ARGS at the end of the ExecStart line.

# Note: This dropin only works with kubeadm and kubelet v1.11+

[Service]

Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf --kubeconfig=/etc/kubernetes/kubelet.conf"

Environment="KUBELET_CONFIG_ARGS=--config=/var/lib/kubelet/config.yaml"

# This is a file that "kubeadm init" and "kubeadm join" generates at runtime, populating the KUBELET_KUBEADM_ARGS variable dynamically

EnvironmentFile=-/var/lib/kubelet/kubeadm-flags.env

# This is a file that the user can use for overrides of the kubelet args as a last resort. Preferably, the user should use

# the .NodeRegistration.KubeletExtraArgs object in the configuration files instead. KUBELET_EXTRA_ARGS should be sourced from this file.

## The following line to be added for CRI-O

Environment="KUBELET_CGROUP_ARGS=--cgroup-driver=systemd"

EnvironmentFile=-/etc/sysconfig/kubelet

ExecStart=

ExecStart=/usr/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_KUBEADM_ARGS $KUBELET_EXTRA_ARGS $KUBELET_CGROUP_ARGS

The file should look like the listing above.
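
As a possible alternative (an assumption on my side, not the method used in this tutorial): the drop-in already reads /etc/sysconfig/kubelet and passes $KUBELET_EXTRA_ARGS to the kubelet, so the same flag could be set there instead. That avoids editing a packaged file under /usr/lib, which a package update may overwrite. The helper name below is hypothetical, and note that it replaces any existing KUBELET_EXTRA_ARGS line.

```shell
# Hypothetical helper: write --cgroup-driver=<driver> into a kubelet sysconfig file.
# NOTE: it replaces any existing KUBELET_EXTRA_ARGS line, so merge by hand
# if you already pass other extra arguments.
set_kubelet_cgroup_driver() {
  file=$1
  driver=$2
  touch "$file"
  # Keep every line except an existing KUBELET_EXTRA_ARGS assignment.
  grep -v '^KUBELET_EXTRA_ARGS=' "$file" > "$file.tmp" || true
  printf 'KUBELET_EXTRA_ARGS=--cgroup-driver=%s\n' "$driver" >> "$file.tmp"
  mv "$file.tmp" "$file"
}

# Usage on a node (run as root, then restart the kubelet):
#   set_kubelet_cgroup_driver /etc/sysconfig/kubelet systemd
#   systemctl daemon-reload && systemctl restart kubelet
```

Use one approach or the other, not both, so the flag is not passed to the kubelet twice.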

4- Now, let’s reload the systemd daemon and then start the CRI-O and kubelet services.

systemctl daemon-reload

systemctl enable crio --now

systemctl enable kubelet --now

5- Repeat all of the previous steps on every node you intend to join to the cluster.

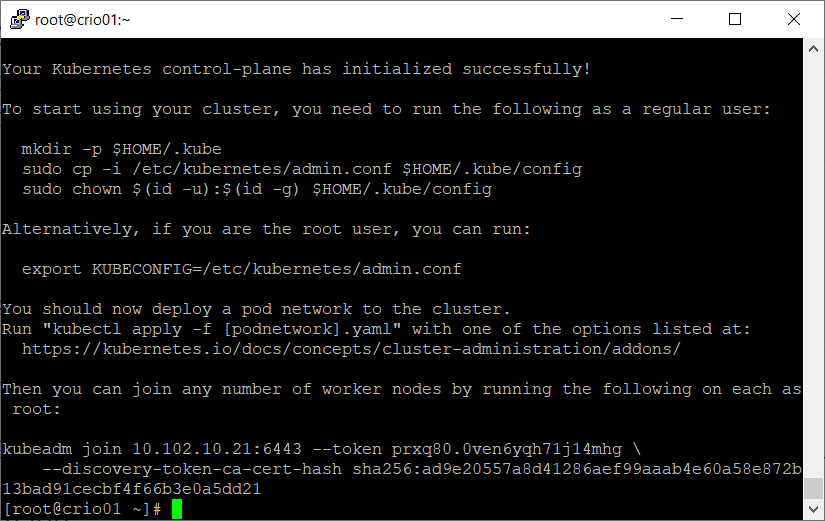

6- Initialize the cluster using kubeadm.

kubeadm init --pod-network-cidr=10.244.0.0/16

7- After a successful initialization, set the KUBECONFIG environment variable to the path of the admin kubeconfig file.

export KUBECONFIG=/etc/kubernetes/admin.conf

Don’t forget to save a copy of the join command (including its token) so you can add worker nodes to the cluster; otherwise, you will need to generate another token later, for example with kubeadm token create --print-join-command.

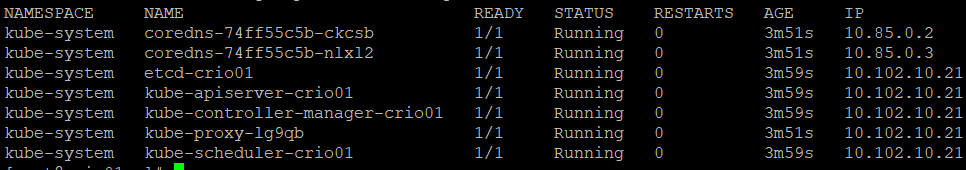

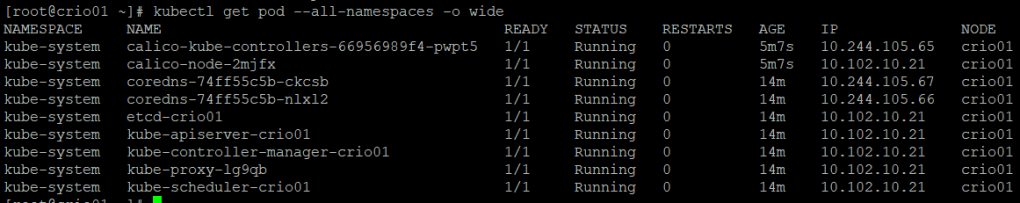

8- Let’s examine the cluster and check the status of the control plane pods.
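
The exact commands are not shown in the text for this step; a common way to do it is kubectl get nodes followed by kubectl get pods -n kube-system -o wide. As a small sketch, the filter below (the name `not_running` is my own) reads that pod table on standard input and prints only the pods that are not yet healthy. It assumes the single-namespace layout where STATUS is the third column.

```shell
# Read a `kubectl get pods -n <namespace>` table on stdin and print the names
# of pods whose STATUS column (3rd field) is neither Running nor Completed.
not_running() {
  awk 'NR > 1 && $3 != "Running" && $3 != "Completed" { print $1 }'
}

# Usage on the control plane node:
#   kubectl get pods -n kube-system -o wide | not_running
```

An empty result means every pod in the namespace reports Running or Completed.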

9- Let’s install Calico as our CNI plugin.

curl https://docs.projectcalico.org/manifests/calico.yaml -O

kubectl apply -f calico.yaml

Check the status of the newly deployed Calico components.

The pods seem to be in good condition; however, their IP addresses were still assigned by CRI-O, not by Calico. Reboot the machine and then check again.

After the reboot, everything is working fine, and the pods (calico-node and CoreDNS) have obtained their IPs from Calico.

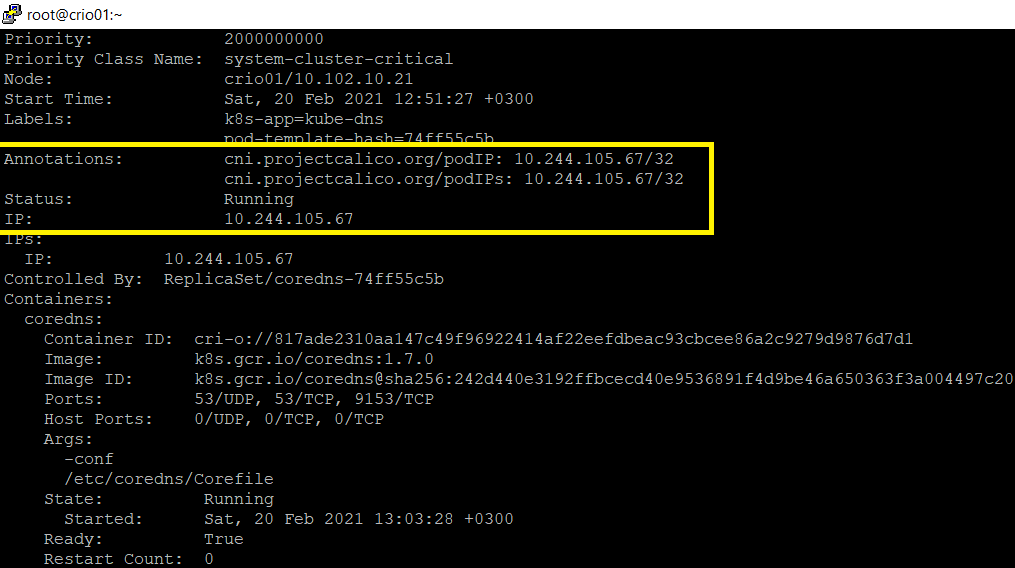

To make sure that a pod is obtaining its IP from the correct CNI, examine the pod and check its annotations. For this example, I will examine one of the coredns pods.

kubectl describe pod coredns-74ff55c5b-ckcsb -n kube-system | more

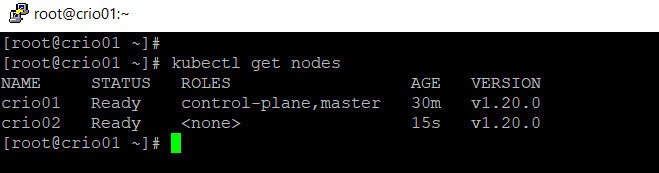
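
As a complementary check, you can test whether a pod IP falls inside the pod network range passed to kubeadm init (10.244.0.0/16 in this demo). This is only a sketch under the assumption that Calico allocates from that range, which depends on how its IP pool is configured; the helper names `ip_to_int` and `in_cidr` are hypothetical.

```shell
# Convert a dotted IPv4 address to a single integer.
# The function body runs in a subshell so the IFS change stays local.
ip_to_int() (
  IFS=.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# Succeed if the IPv4 address $1 lies inside the CIDR block $2 (e.g. 10.244.0.0/16).
in_cidr() (
  ip=$1
  net=${2%/*}    # network part, before the slash
  bits=${2#*/}   # prefix length, after the slash
  mask=$(( (4294967295 << (32 - bits)) & 4294967295 ))
  [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
)

# Usage, with the pod IP taken from the describe output:
#   in_cidr 10.244.1.5 10.244.0.0/16 && echo "inside the pod CIDR"
```

A pod whose address falls outside the expected range is a hint that it was created before the CNI plugin took over, as happened before the reboot above.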

10- Let’s join worker nodes to the cluster. Follow steps 1 to 4 to prepare the other nodes, then run the join command on each of them.

11- Check the status of the newly joined node(s)

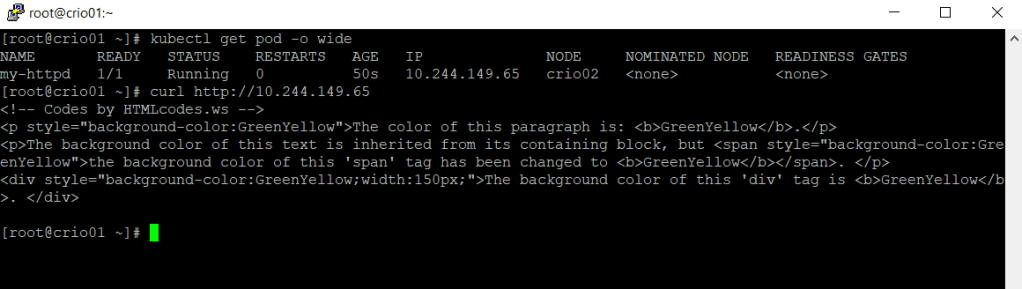

Great. Now, let’s create a test pod to make sure that everything works properly. You can use the following pod image, or whichever one you like.

kubectl run my-httpd --image mroushdy/my-httpd:green

To enable kubectl auto-completion on the command line, follow the steps in this guide here.

I hope this has been informative 🙂